See The Conversation

Live captions, instant translation, and AI actions keep every conversation captured and searchable on mobile, desktop, or AR glasses.

Conversation Accessibility

Conversation exclusion is an accessibility barrier

People are present in important conversations, but they cannot fully access what is being said, by whom, and what needs to happen next.

Who is excluded

Hard-of-hearing users

Can miss key details when speakers overlap, face away, or speak from different positions.

Users with memory challenges

May lose track of names, commitments, dates, and what needs to happen next after the conversation ends.

Multilingual users

Are left out when language switching happens quickly and translation is delayed or unavailable.

Millions of people are affected

Conversation exclusion affects people at a massive scale.

People worldwide live with hearing loss

People worldwide live with dementia

People in the U.S. who speak a non-English language at home

People worldwide have disabling hearing loss

What Heard does

One platform for conversation accessibility

Heard captures live speech, identifies speakers, translates in real time, and turns conversations into summaries, actions, and searchable memory.

Conversation to Action

Conversation → Understanding → Action

Heard summarizes what happened, what was decided, and what needs to happen next.

Step 1

Capture

Record speech in real time with speaker separation.

Step 2

Transcribe

Follow live captions, speaker identification, and translation as the conversation unfolds.

Step 3

AI Summary

Get a concise recap of what was decided.

Step 4

AI Actions

Launch one-click actions while context is still fresh.

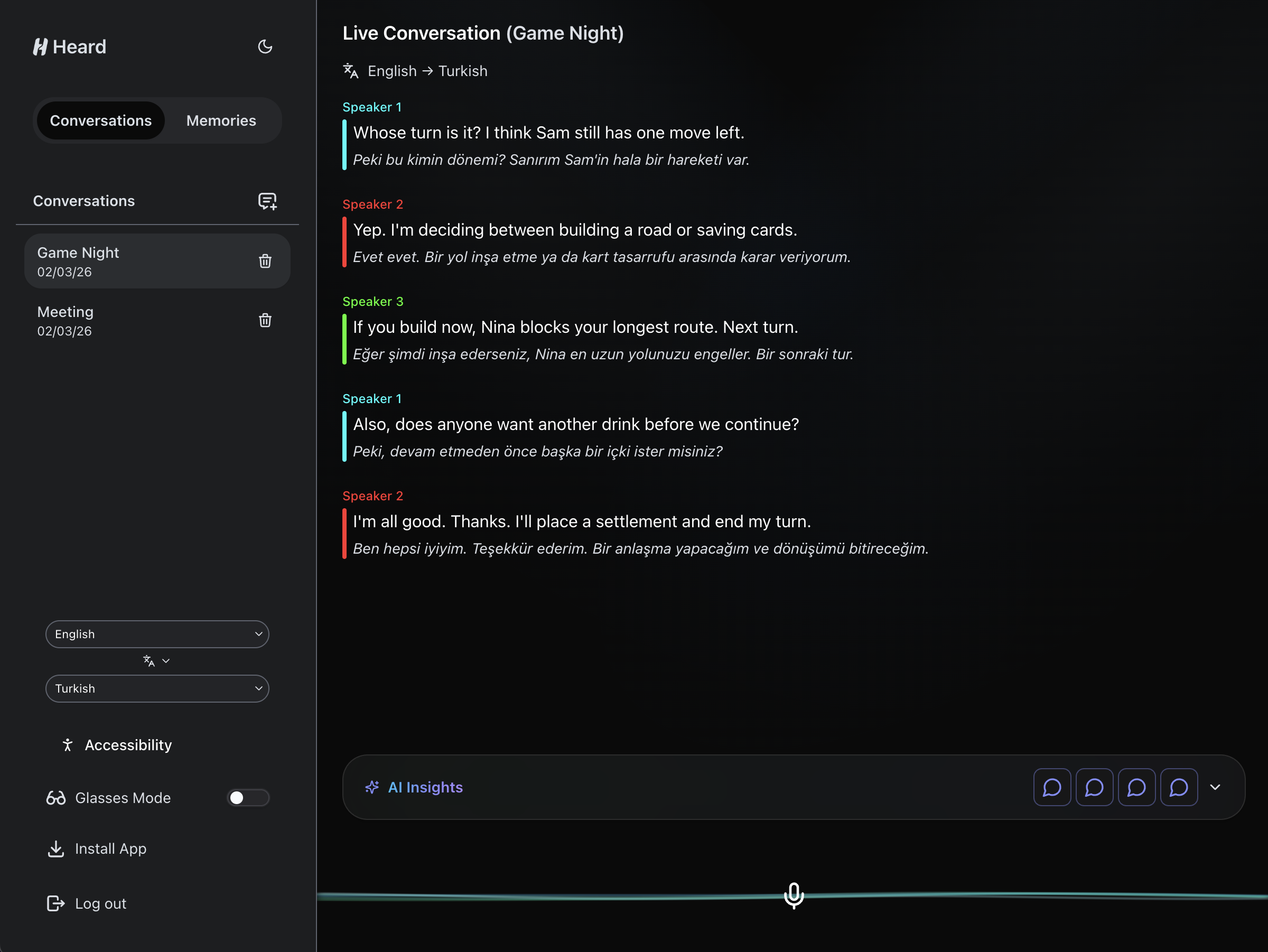

Transcribe & translate

Instant translation

Translate live across dozens of languages so people can stay in the same conversation in real time.

AI actions

Get actionable next steps

Heard suggests AI-powered actions based on the conversation, such as emails, messages, reminders, and calendar events, and each one can be executed with a single click.

RAG Memory Assistant

Ask any question about any conversation

Heard uses retrieval-augmented generation (RAG) to find the most relevant moments, then returns practical answers quickly. Retrieval works even when you have thousands of conversations saved. Search considers speaker, date/time, and location metadata when available. Answers map back to relevant moments so users can verify what happened.

You discussed the pilot rollout timeline, agreed to send the venue shortlist by Friday, and noted that Jordan will confirm interpreter availability.

Referenced memories

- Conversation 412 • Tue 7:42 PM • Stockbridge Grill — discussed rollout timeline and venue options.

- Conversation 409 • Mon 9:15 PM • Mentioned interpreter confirmation owner: Jordan.
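For readers curious how the retrieval step of a RAG assistant could work, here is a minimal sketch. It is not Heard's implementation: the `Moment` fields, the `retrieve` function, the speaker names, and conversation 377 are all hypothetical, and the scoring is a toy word-overlap cosine similarity standing in for the learned embeddings a production system would more likely use. It illustrates two ideas from the description above: filtering on metadata only when it is available, and returning the source moments so an answer can point back to them.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Moment:
    """One transcribed moment. All field names are hypothetical."""
    conversation_id: int
    speaker: str
    when: str                  # e.g. "Tue 7:42 PM"
    location: Optional[str]    # metadata may be missing
    text: str


def tokens(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(
    question: str,
    moments: List[Moment],
    k: int = 2,
    speaker: Optional[str] = None,
    location: Optional[str] = None,
) -> List[Moment]:
    """Return up to k moments most similar to the question.

    Metadata filters are applied only when the caller supplies them.
    """
    q = tokens(question)
    candidates = [
        m for m in moments
        if (speaker is None or m.speaker == speaker)
        and (location is None or m.location == location)
    ]
    scored = [(cosine(q, tokens(m.text)), m) for m in candidates]
    scored = [(s, m) for s, m in scored if s > 0]   # drop unrelated moments
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:k]]


# Illustrative data only; speakers and conversation 377 are made up.
MOMENTS = [
    Moment(412, "Sam", "Tue 7:42 PM", "Stockbridge Grill",
           "discussed the pilot rollout timeline and venue options"),
    Moment(409, "Jordan", "Mon 9:15 PM", None,
           "Jordan will confirm interpreter availability"),
    Moment(377, "Sam", "Sun 1:05 PM", "Stockbridge Grill",
           "ordered coffee and talked about the weather"),
]

hits = retrieve("Who confirms interpreter availability?", MOMENTS, k=1)
references = [f"Conversation {m.conversation_id} • {m.when}" for m in hits]
```

The returned `Moment` objects carry their conversation ID and timestamp, so a generated answer can list them as referenced memories and let the user check the original moment.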

Heard works wherever you do

AR glasses put you inside the conversation

Users can follow live captions and translation in peripheral view, keep eye contact, and stay present in fast conversations without switching attention to another device.

Beyond Hearing Accessibility

Accessibility settings are built in, not bolted on.

Users can tune text size, contrast, readability, and motion to match visual and cognitive needs.

See the conversation

Ready to experience Heard?

See how Heard helps you transcribe, translate, understand, and act on conversations in one continuous flow.